Data Machina #190

Building Apps with GPT Models. Modern AI Stack. MIT Data-centric AI. OSS neural-net chess engine. Running LLMs on a single GPU. Modular Deep Learning. BigVGAN. ControlNet. MLOps for AI at scale

Building GPT Model Apps with the Modern AI Stack. A colleague reckons that, arguably, there may be fewer than 200 people in the world who can actually develop LLMs from scratch. Most of them are employed by the BigTech giants or have left to join a handful of massively funded startups.

You need tens (hundreds?) of millions of dollars to fund it all: LLM experts, pre-training and training LLMs at scale with tens of billions of parameters and trillions of tokens, testing and productising the LLMs, running humongous compute infra… Timothy has estimated that it can cost $32 to $62 per 1 million parameters just to train a decent LLM. See: Counting the Cost of LLMs.
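To get a feel for what that range implies, here is a back-of-the-envelope calculation that simply multiplies a model's parameter count by the quoted $32 to $62 per million parameters. It assumes cost scales linearly with parameter count, and the 175-billion-parameter size is an illustrative assumption, not a figure from the article.

```python
def training_cost_range(n_params: float,
                        low_per_million: float = 32.0,
                        high_per_million: float = 62.0) -> tuple[float, float]:
    """Return (low, high) training-cost estimates in dollars.

    Assumes cost scales linearly with parameter count at the quoted
    $32-$62 per 1 million parameters.
    """
    millions = n_params / 1_000_000
    return millions * low_per_million, millions * high_per_million


if __name__ == "__main__":
    # Illustrative model size (assumption): 175 billion parameters.
    low, high = training_cost_range(175e9)
    print(f"${low:,.0f} - ${high:,.0f}")
```

For a 175B-parameter model this prints `$5,600,000 - $10,850,000`, i.e. roughly $5.6M to $10.9M for training alone, before salaries, data and serving costs.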

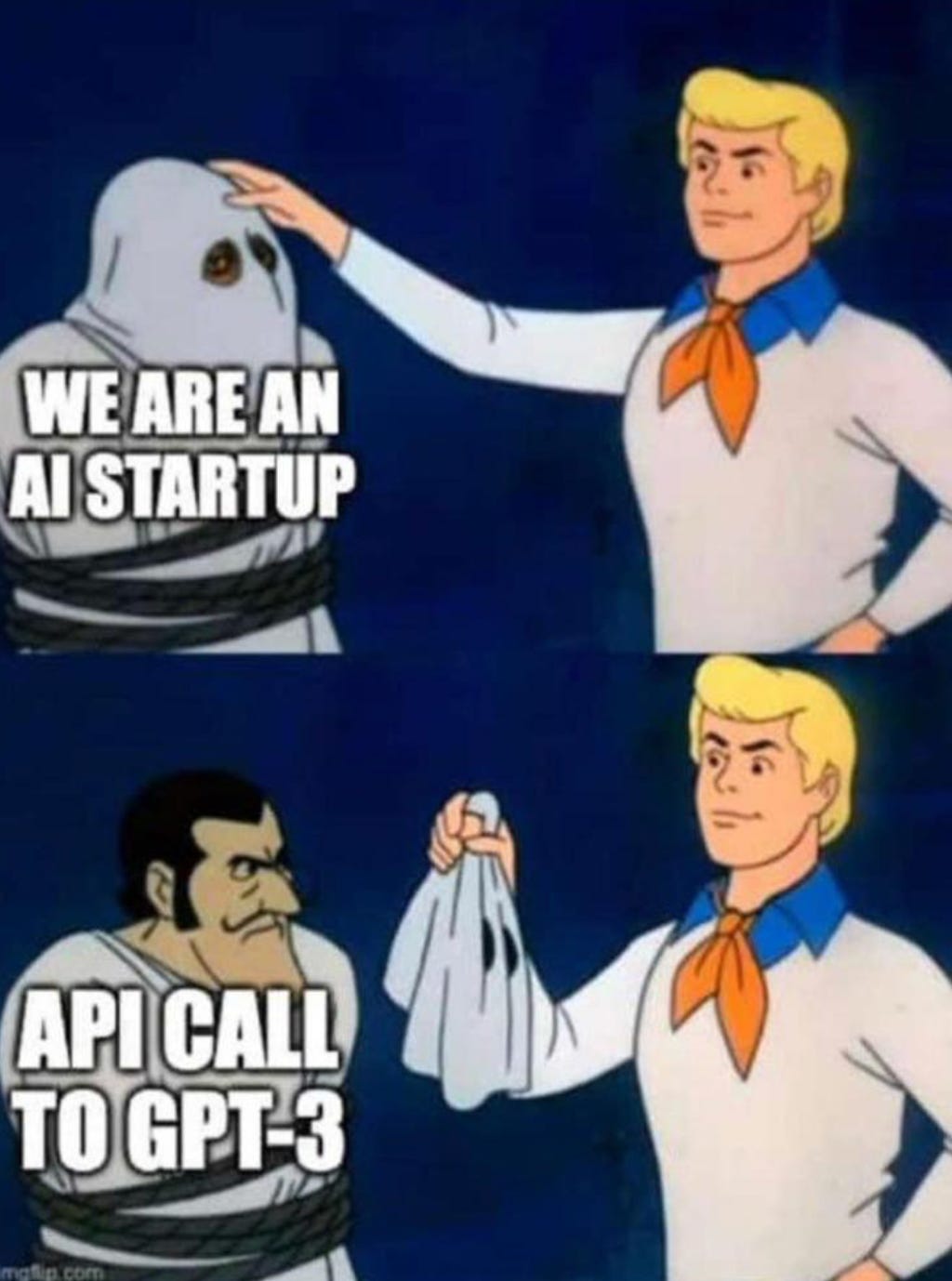

The Modern AI Stack emerging? There is a new breed of indie AI devs and pico AI startups that have fully embraced the OpenAI API and GPT models to quickly build nifty, snappy ChatGPT apps that generate good, recurring dough.

Thousands of indie AI devs and pico AI startups don’t need millions in VC money and won’t use any of the packaged AI/ML and MLOps platforms from the giant cloud vendors. @Levelsio is a great example of an indie AI dev who built several income-generating AI apps in a few months.

Their playbook goes something like: “I’ll pay for the OpenAI API, quickly build some wrappers and augmentations around GPT models, then add a 50% margin on top of OpenAI’s pricing and charge my customers on a pay-per-use model.”
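The pricing in that playbook is simple arithmetic: take the upstream API cost of a request and add a 50% margin. A minimal sketch follows; `price_per_request` is a hypothetical helper, and the per-1K-token price is a placeholder you pass in, not OpenAI's actual price list (real pricing varies by model and may differ for prompt and completion tokens).

```python
from decimal import Decimal, ROUND_HALF_UP


def price_per_request(tokens_used: int,
                      upstream_price_per_1k: Decimal,
                      margin: Decimal = Decimal("0.50")) -> Decimal:
    """Charge = upstream API cost for the tokens used, plus a margin.

    upstream_price_per_1k is a hypothetical input: plug in whatever the
    provider currently charges per 1,000 tokens for the model you call.
    """
    upstream_cost = Decimal(tokens_used) / Decimal(1000) * upstream_price_per_1k
    charge = upstream_cost * (Decimal(1) + margin)
    # Round to a tenth of a cent for billing display.
    return charge.quantize(Decimal("0.001"), rounding=ROUND_HALF_UP)


if __name__ == "__main__":
    # Placeholder price (assumption): $0.02 per 1K tokens, 1,500 tokens used.
    print(price_per_request(1500, Decimal("0.02")))
```

`Decimal` is used instead of `float` so that money amounts round predictably; with the placeholder inputs the customer is charged $0.045 for a request that cost $0.030 upstream.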

These indie AI devs and pico AI startups are using a kind of Modern AI Stack that might look like this:

LLMs: OpenAI, Cohere, BigScience BLOOM, EleutherAI, NVIDIA NeMo, GPT-J, Hugging Face

Powerful embeddings, similarity/vector search: Milvus, Pinecone, Weaviate

ChatGPT augmentation, composability - LangChain, LlamaIndex (GPTIndex), MIT Augur Client

Efficient, cheap, fast ChatGPT: Colossal-AI

ChatGPT Apps deployment: Dust

Prompt engineering: Everyprompt, Promptify, OpenPrompt, Betterprompt, Soaked, Dyno

Here are some examples of ChatGPT apps built with (parts of) the Modern AI Stack:

ChatGPT memory. Because of its limited context window (the maximum number of tokens it can take in at once), ChatGPT has only a short-term memory. Remembering, and having a long memory, is crucial for building reliable conversational chatbots. LangChain has released a series of notebooks that augment and extend ChatGPT’s conversational memory.
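A common way to live within the context window is to send the model only the most recent turns that fit a token budget. The sketch below illustrates that idea in plain Python; `WindowMemory` is a hypothetical class, not LangChain's API, and it approximates token counts by whitespace-separated words, which is an assumption (real tokenizers count differently).

```python
from collections import deque
from dataclasses import dataclass, field


def count_tokens(text: str) -> int:
    # Crude approximation (assumption): one token per whitespace-separated word.
    return len(text.split())


@dataclass
class WindowMemory:
    """Keeps the most recent chat turns that fit in a token budget."""
    max_tokens: int
    turns: deque = field(default_factory=deque)

    def add(self, role: str, text: str) -> None:
        self.turns.append((role, text))
        # Drop the oldest turns until the whole history fits the budget.
        while self.turns and self.total_tokens() > self.max_tokens:
            self.turns.popleft()

    def total_tokens(self) -> int:
        return sum(count_tokens(text) for _, text in self.turns)

    def as_prompt(self) -> str:
        return "\n".join(f"{role}: {text}" for role, text in self.turns)


if __name__ == "__main__":
    memory = WindowMemory(max_tokens=8)
    memory.add("user", "Hi, my name is Ada")
    memory.add("assistant", "Hello Ada!")
    memory.add("user", "What is my name?")
    print(memory.as_prompt())
```

Running it shows the trade-off: the first turn, which held the user's name, falls out of the window, so the model can no longer answer the question. Approaches such as summarising older turns, or storing them and retrieving the relevant ones, are ways to keep that information around.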

Vector search and the OpenAI embedding model. Using text embeddings, you can extend ChatGPT with stuff like search, clustering, recommendations, anomaly detection and classification. For that you need some powerful vector search.
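At its core, vector search ranks stored embeddings by their similarity to a query embedding. The brute-force sketch below uses cosine similarity over a few toy 3-dimensional vectors; these are hypothetical stand-ins for real embeddings (text-embedding-ada-002, for example, returns 1,536-dimensional vectors), and a vector database such as Milvus, Pinecone or Weaviate replaces the linear scan with an approximate nearest-neighbour index.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def top_k(query: list[float], index: dict[str, list[float]],
          k: int = 2) -> list[tuple[str, float]]:
    """Brute-force nearest neighbours: score every stored vector, keep the best k."""
    scored = [(doc_id, cosine_similarity(query, vec)) for doc_id, vec in index.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


if __name__ == "__main__":
    # Toy "embeddings" (hypothetical values, not produced by a real model).
    index = {
        "inflation": [0.9, 0.1, 0.0],
        "interest rates": [0.8, 0.3, 0.1],
        "football": [0.0, 0.2, 0.9],
    }
    query = [0.85, 0.2, 0.05]
    for doc_id, score in top_k(query, index):
        print(f"{doc_id}: {score:.3f}")
```

The query vector points in roughly the same direction as the two economics entries, so they are returned and "football" is left out.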

This is an interesting combo of GPT-3 and vector search: MrsStaxs, a Q&A Slack bot specialised in OpenStax’s "Principles of Macroeconomics 3e". It uses Weaviate for the vector search.

In this repo, Travis shows how to build a YouTube semantic search with OpenAI text-embedding-ada-002, Pinecone, Vercel and Next.js.

This Python notebook shows how to build a retrieval-enhanced generative Q&A system with Pinecone and OpenAI.
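The retrieval-enhanced pattern boils down to two steps: retrieve the passages most relevant to the question, then paste them into the prompt as context for the model. The sketch below covers only the prompt-assembly step; the passages are assumed to come from a vector search such as Pinecone, and both the `build_rag_prompt` helper and its prompt template are hypothetical, not taken from the notebook.

```python
def build_rag_prompt(question: str, passages: list[str], max_chars: int = 2000) -> str:
    """Assemble a Q&A prompt from retrieved passages (hypothetical template).

    Passages are assumed to be pre-sorted by relevance; they are appended
    until the character budget is reached, so the most relevant ones survive.
    """
    context_parts: list[str] = []
    used = 0
    for passage in passages:
        if used + len(passage) > max_chars:
            break
        context_parts.append(passage)
        used += len(passage)
    context = "\n---\n".join(context_parts)
    return (
        "Answer the question using only the context below. "
        "If the answer is not in the context, say \"I don't know\".\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\nAnswer:"
    )


if __name__ == "__main__":
    print(build_rag_prompt("What is GDP?", ["GDP is the total value of goods and services produced."]))
```

Telling the model to answer only from the supplied context, and to admit when the answer is missing, is a common way to reduce made-up answers; the character budget is a stand-in for a proper token count.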

This is another way to combine ChatGPT with embeddings in PostgreSQL for Q&A-ing documentation: Building Supabase Clippy: ChatGPT for Supabase Docs

Killer UI/UX for GPT apps. Having a scalable, fast, responsive UI/UX and front-end can make or break your GPT app. Here are some cool examples:

Agent in the browser. This app extends ChatGPT with a Weather API, News, Search, Wolfram Alpha and REST APIs. It uses LangChain and GPTIndex. Check out AgentHQ: a tool for developing AI agents in the browser.
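Agents like this work by letting the model pick a tool, running that tool, and feeding the result back into the conversation. The sketch below shows only the dispatch step in plain Python. The `Action: tool_name | input` reply format, the tool names and the stubbed tools are all hypothetical; LangChain defines its own prompt formats and output parsers, and real tools would call the weather, news or Wolfram Alpha APIs.

```python
import re
from typing import Callable


# Hypothetical stub tools standing in for real API calls.
def weather(city: str) -> str:
    return f"(stub) forecast for {city}"


def news(topic: str) -> str:
    return f"(stub) headlines about {topic}"


TOOLS: dict[str, Callable[[str], str]] = {
    "weather": weather,
    "news": news,
}

# Matches a line such as "Action: weather | London" anywhere in the reply.
ACTION_RE = re.compile(r"^Action:\s*(\w+)\s*\|\s*(.+)$", re.MULTILINE)


def dispatch(model_reply: str) -> str:
    """Parse a (hypothetical) 'Action: tool | input' line and run the tool."""
    match = ACTION_RE.search(model_reply)
    if match is None:
        return model_reply  # no tool requested: treat the reply as the final answer
    name, tool_input = match.group(1), match.group(2).strip()
    tool = TOOLS.get(name)
    if tool is None:
        return f"error: unknown tool {name!r}"
    return tool(tool_input)


if __name__ == "__main__":
    print(dispatch("Thought: I need the forecast.\nAction: weather | London"))
```

In a full agent loop the tool's output would be appended to the conversation and the model called again until it replies without an `Action:` line.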

Fast UX. This blog post breaks down a full-stack template for building fast GPT-3 apps with Next.js and Vercel Edge Functions. Here they build a serverless bot for writing Twitter bios.

Responsive front-end. Using ChatGPT to build a Kedro MLOps pipeline. Talk with ChatGPT to build a feature-rich app with a Streamlit frontend.

ChatGPT bundlers and packagers: some pico startups, like ChatBase, Berry.ai and IngestAI.io, are offering "build ChatGPT apps in minutes" kind of stuff.

Bringing [almost] all the bits & pieces of a GPT app together. This is an excellent post. It’s well structured and documented. It describes an e2e approach to Building GPT-3 applications — beyond the prompt (blog & code). From prototyping to finally releasing a GPT app that does “something useful” with a nice UI/UX. Brilliant!

Here are some indoor entertainment suggestions for a lazy Sunday:

The long read: I really enjoyed reading Riccardo’s essay on Dante, Divina Commedia, ChatGPT, and the MLOps Cycle

Philosophy Q&A session: Cost of living crisis? How to live a stoic life? Ask Seneca. This is a chatbot that uses FastAPI, Qdrant, Sentence-Transformers & GPT-3.

Brain teaser: Perhaps grab a beer before reading this? On ChatGPT and the Analytic-Synthetic Distinction… Kantians rejoice!

Have a nice week.

10 Link-o-Troned

Reducing Overfishing w/ Stable Diffusion, GAN, R-CNN, Satellites

Cloud MLOps Pipeline for Prod-ready RecSys w/ OSS NVIDIA Merlin

the ML Pythonista

the ML codeR

Deep & Other Learning Bits

AI/ DL ResearchDocs

El Robótico

data v-i-s-i-o-n-s

MLOps Untangled

AI startups -> radar

ML Datasets & Stuff

Postscript, etc

Tips? Suggestions? Feedback? email Carlos

Curated by @ds_ldn in the middle of the night.